AI Technology Transparency: Beyond Black Boxes to Trustworthy AI

Discover how AI technology can become transparent and accountable. Learn strategies to build trust, reduce risk, and scale AI responsibly in enterprises.

Beyond Black Boxes: Making AI Technology Transparent and Accountable

Table of Contents

- Introduction

- What Does “Black Box” Mean in AI Technology?

- Why Transparency in AI Technology Matters

- Key Pillars of Transparent AI Technology

- How Enterprises Can Make AI Technology Accountable

- Real-World Example: Transparent AI in Enterprise Automation

- The Role of Agentic AI in Accountability

- Internal Best Practices for AI Transparency

- The Business Benefits of Transparent AI Technology

- Challenges in Making AI Transparent

- The Future of Transparent AI Technology

- Conclusion: Trust is the New Currency of AI

- Call to Action

- FAQ

Introduction

AI technology is transforming businesses at a remarkable pace. From customer support chatbots to autonomous agents and predictive analytics, enterprises are embedding AI across their operations.

But there is a growing problem: most AI systems operate like black boxes.

They make decisions, generate outputs, and automate actions, but users and organizations often cannot explain why or how.

In today’s regulation- and trust-driven digital world, opaque AI is a business risk. Transparency and accountability are no longer optional; they are essential for enterprise-scale adoption.

At xCroTek, we work closely with organizations implementing AI technology, and one lesson is clear: visibility drives trust, and trust drives adoption.

In this article, we’ll explore why AI transparency matters, how to achieve it, and practical strategies to make AI systems accountable in real-world deployments.

What Does “Black Box” Mean in AI Technology?

A black-box AI system produces results without providing interpretable reasoning.

For example:

- A model denies a loan but cannot explain the reason.

- An AI agent changes system configurations automatically without audit logs.

- A recommendation engine shapes decisions while its logic and potential biases remain hidden.

This lack of transparency creates major risks in finance, healthcare, security, and enterprise automation.

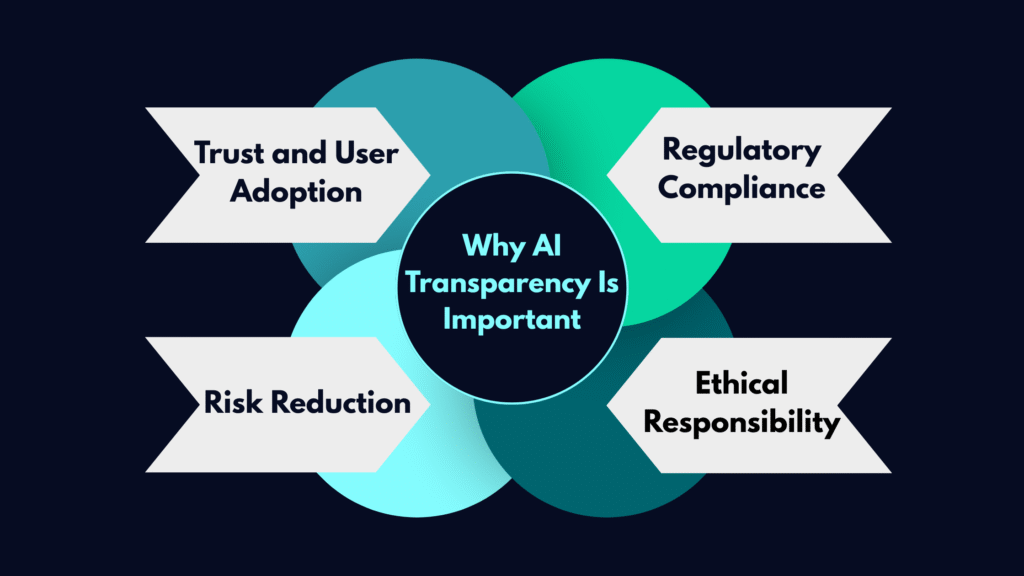

Why Transparency in AI Technology Matters

1. Trust and User Adoption

People trust systems they understand. When AI decisions are explainable, stakeholders feel confident using them.

2. Regulatory Compliance

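Governments and standards bodies worldwide are introducing AI regulations and frameworks, such as:

- EU AI Act

- GDPR, which includes rules on automated decision-making

- NIST AI Risk Management Framework, a voluntary U.S. framework

For high-risk use cases, transparency is increasingly a compliance requirement, not a luxury.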

3. Risk Reduction

Opaque AI can produce biased, incorrect, or harmful decisions. Transparent systems allow faster detection and correction.

4. Ethical Responsibility

AI technology impacts real people. Accountability ensures fairness, privacy, and ethical use.

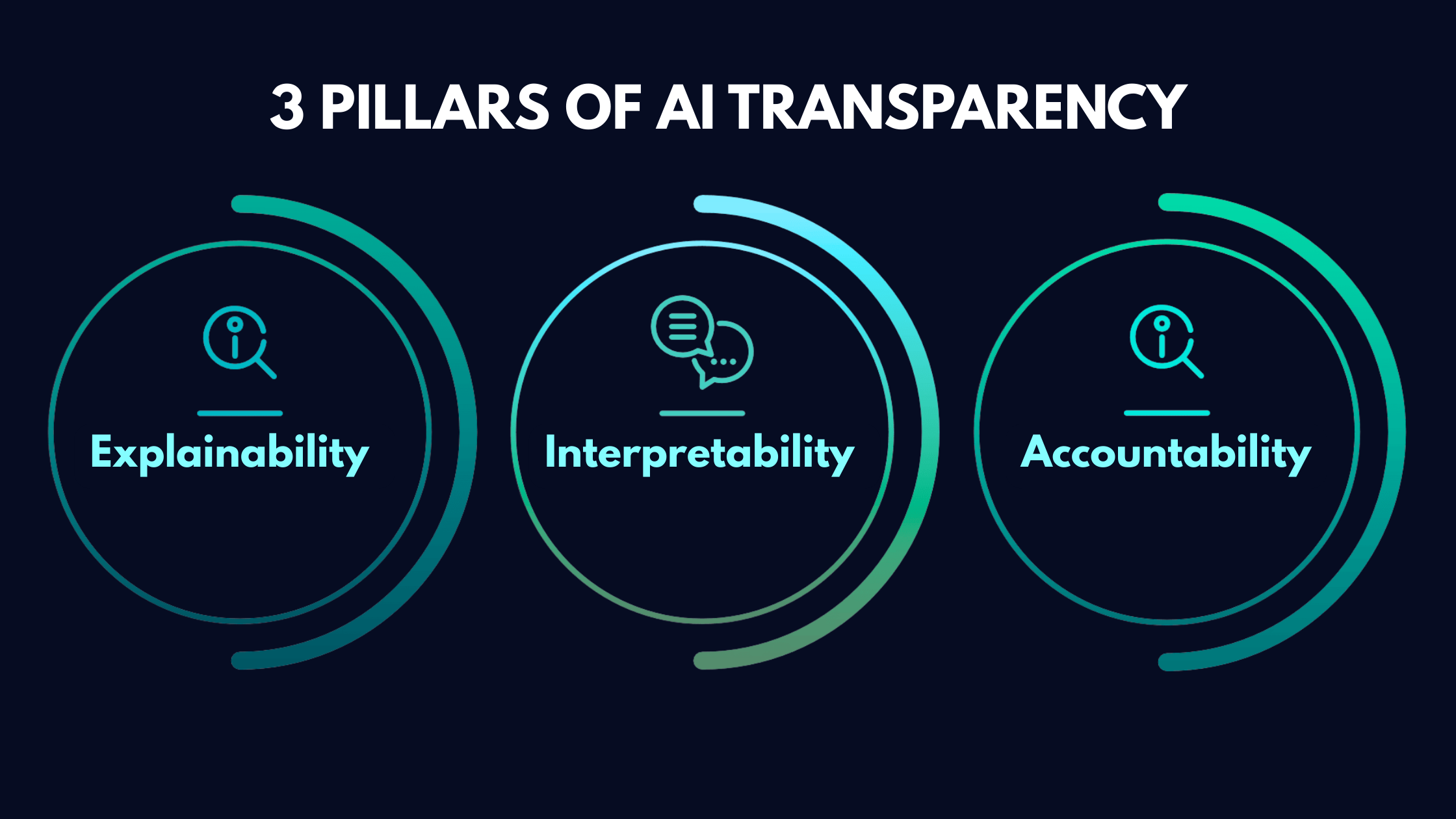

Key Pillars of Transparent AI Technology

1. Explainable AI (XAI)

Explainability is the ability of an AI system to provide easy-to-understand explanations for its decisions and actions. Clear explanations give users insight into how the AI reached a particular outcome. Black-box models, by contrast, return results without showing how they arrived at them. This makes those results hard for users to understand, question, or trust.
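To make this concrete, here is a minimal Python sketch of an explanation for the loan example discussed earlier. It assumes a simple linear scoring model. The feature names, weights, baseline values, and threshold are hypothetical. For a linear model, each feature's contribution is its weight times its distance from a baseline, and the contributions add up exactly to the score. Complex models usually need approximation techniques such as SHAP or LIME instead.

```python
# Hypothetical linear credit-scoring model; all numbers are illustrative.
WEIGHTS = {"income_k": 0.04, "debt_ratio": -3.0, "late_payments": -0.8}
BASELINE = {"income_k": 50.0, "debt_ratio": 0.3, "late_payments": 0.0}  # e.g. portfolio averages
INTERCEPT = 0.0   # score of an applicant exactly at the baseline
THRESHOLD = 0.0   # approve when score >= THRESHOLD


def explain_decision(applicant: dict[str, float]) -> dict:
    """Score an applicant and attribute the score to each feature.

    For a linear model, weight * (value - baseline) is an exact additive
    attribution: the contributions sum to score - INTERCEPT.
    """
    contributions = {
        name: WEIGHTS[name] * (applicant[name] - BASELINE[name]) for name in WEIGHTS
    }
    score = INTERCEPT + sum(contributions.values())
    # List the reasons with the largest absolute impact first.
    reasons = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return {
        "approved": score >= THRESHOLD,
        "score": round(score, 3),
        "reasons": [(name, round(c, 3)) for name, c in reasons],
    }


result = explain_decision({"income_k": 40.0, "debt_ratio": 0.5, "late_payments": 2.0})
print(result)
```

For this applicant the model denies the loan and lists `late_payments` as the largest driver. That is exactly the kind of reason a black-box model cannot give.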

2. Interpretability

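Interpretability is the degree to which humans can understand how an AI model works internally: how its inputs, parameters, and internal processes combine to produce its predictions. Explainability focuses on justifying individual outputs, while interpretability focuses on the mechanics of the model itself.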
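An inherently interpretable model makes its internal logic visible by design. The sketch below is a hypothetical two-level decision tree for triaging IT incidents, and it returns the exact path of rules behind each prediction. The feature names, thresholds, and labels are illustrative assumptions, not taken from any real system.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class Leaf:
    label: str


@dataclass
class Node:
    feature: str
    threshold: float
    left: "Tree"   # branch taken when value <= threshold
    right: "Tree"  # branch taken when value > threshold


Tree = Union[Leaf, Node]


def predict_with_path(tree: Tree, x: dict[str, float]) -> tuple[str, list[str]]:
    """Return the predicted label and every rule applied on the way to it."""
    path: list[str] = []
    node = tree
    while isinstance(node, Node):
        value = x[node.feature]
        if value <= node.threshold:
            path.append(f"{node.feature}={value} <= {node.threshold}")
            node = node.left
        else:
            path.append(f"{node.feature}={value} > {node.threshold}")
            node = node.right
    return node.label, path


# Hypothetical incident-triage tree; thresholds are illustrative.
TREE = Node("error_rate", 0.05,
            left=Leaf("monitor"),
            right=Node("affected_users", 100,
                       left=Leaf("auto_resolve"),
                       right=Leaf("escalate_to_human")))

label, path = predict_with_path(TREE, {"error_rate": 0.2, "affected_users": 500})
print(label, path)
```

The trade-off is expressiveness: a tree this small is easy to read but may be less accurate than a deep model. This is the tension noted under "Challenges in Making AI Transparent."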

3. Accountability

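Accountability means that identifiable people and organizations are answerable for an AI system’s decisions and actions, with mechanisms in place to trace, review, and correct them. This matters because AI algorithms and chatbots can go wrong, for example when forecasting business growth or recommending a product. When they do, someone must be able to detect the error, explain it, and fix it.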

How Enterprises Can Make AI Technology Accountable

Build Transparent Data Pipelines

Data is the foundation of AI. Enterprises must document:

- Data sources

- Data preprocessing

- Bias mitigation strategies

- Privacy safeguards
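One lightweight way to keep this documentation current is to attach a structured record to each dataset and update it as the pipeline runs. The `DatasetRecord` class below is a hypothetical sketch. Its field names and example values are assumptions chosen to mirror the four items listed above.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DatasetRecord:
    """Hypothetical documentation record that travels with a training dataset."""
    name: str
    sources: list[str]
    privacy_safeguards: list[str] = field(default_factory=list)
    bias_mitigation: list[str] = field(default_factory=list)
    preprocessing: list[dict] = field(default_factory=list)

    def log_step(self, step: str, rows_before: int, rows_after: int) -> None:
        """Append a preprocessing step and how many rows it kept."""
        self.preprocessing.append(
            {"step": step, "rows_before": rows_before, "rows_after": rows_after}
        )

    def to_json(self) -> str:
        """Serialize the record so it can be versioned next to the data."""
        return json.dumps(asdict(self), indent=2)


record = DatasetRecord(
    name="loan_applications_2024",
    sources=["core_banking_export", "credit_bureau_feed"],
    privacy_safeguards=["customer IDs pseudonymized before training"],
    bias_mitigation=["reweighted under-represented regions"],
)
record.log_step("drop rows with missing income", rows_before=10_000, rows_after=9_640)
print(record.to_json())
```

Recording row counts per step makes silent data loss visible, for example a filter that disproportionately removes one customer segment.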

Implement AI Decision Logs

Every AI decision should be traceable. Decision logs help in:

- Incident investigation

- Compliance reporting

- Performance optimization
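A decision log can be as simple as an append-only list of structured entries. The sketch below keeps the log in memory for illustration; a real deployment would write to durable, access-controlled storage. It chains entries with SHA-256 hashes so that later edits to an entry become detectable. The class name, fields, and model version string are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


class DecisionLog:
    """Append-only log where each entry stores the hash of the previous one."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, model_version: str, inputs: dict, output: object, explanation: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "inputs": inputs,
            "output": output,
            "explanation": explanation,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the hash itself, with sorted keys for a stable encoding.
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        """False if an entry was modified or the hash chain is broken.

        Deleting or reordering entries breaks the chain; silently truncating
        the newest entries is not detectable by this check alone.
        """
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


log = DecisionLog()
log.record("credit-model-1.3", {"applicant_id": "A-102"}, "denied",
           "late_payments was the largest negative contribution")
log.record("credit-model-1.3", {"applicant_id": "A-103"}, "approved",
           "all contributions within policy limits")
print(log.verify())  # True

log.entries[0]["output"] = "approved"  # simulate tampering
print(log.verify())  # False
```

Storing the explanation alongside the output is what makes the log useful for incident investigation. Without it, the log shows what happened but not why.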

Create AI Ethics Policies

Organizations should define:

- Acceptable AI use cases

- Risk classification

- Ethical guidelines

- User consent policies
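Risk classification can be encoded so that every new AI use case is checked against policy before deployment. The tiers below are loosely modeled on the EU AI Act’s four risk levels: unacceptable, high, limited, and minimal. The mapping of use cases to tiers, the consent rule, and the function name are illustrative assumptions, not a legal classification.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"  # prohibited outright
    HIGH = "high"                  # allowed with human oversight and documentation
    LIMITED = "limited"            # allowed; users must be told they are dealing with AI
    MINIMAL = "minimal"            # allowed with standard monitoring


# Illustrative policy table; each organization defines its own mapping.
USE_CASE_TIERS = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "credit_scoring": RiskTier.HIGH,
    "customer_support_chatbot": RiskTier.LIMITED,
    "spam_filtering": RiskTier.MINIMAL,
}


def policy_check(use_case: str, user_consented: bool) -> dict:
    """Classify a use case and derive the controls it needs before deployment."""
    # Unknown use cases default to HIGH so they are reviewed, not silently allowed.
    tier = USE_CASE_TIERS.get(use_case, RiskTier.HIGH)
    return {
        "tier": tier.value,
        # In this sketch, consent is required for every tier except MINIMAL.
        "allowed": (tier is not RiskTier.UNACCEPTABLE
                    and (user_consented or tier is RiskTier.MINIMAL)),
        "requires_human_review": tier is RiskTier.HIGH,
        "must_disclose_ai": tier in (RiskTier.HIGH, RiskTier.LIMITED),
    }


print(policy_check("credit_scoring", user_consented=True))
```

Defaulting unknown use cases to the high-risk tier is a deliberate design choice. New ideas get reviewed instead of slipping through as "unclassified."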

Use Model Cards and Documentation

Model cards explain:

- Model purpose

- Training data

- Limitations

- Performance metrics

This documentation improves transparency for developers and stakeholders.
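Model cards are easiest to maintain when they are generated from structured metadata rather than written by hand. The following sketch renders a hypothetical `ModelCard` as Markdown. The model name, data description, limitations, and metric values are placeholders, not real results.

```python
from dataclasses import dataclass


@dataclass
class ModelCard:
    """Hypothetical model card covering purpose, data, limitations, and metrics."""
    name: str
    purpose: str
    training_data: str
    limitations: list[str]
    metrics: dict[str, float]

    def to_markdown(self) -> str:
        lines = [f"# Model Card: {self.name}", "",
                 "## Purpose", self.purpose, "",
                 "## Training Data", self.training_data, "",
                 "## Limitations"]
        lines += [f"- {item}" for item in self.limitations]
        lines += ["", "## Performance Metrics"]
        lines += [f"- {metric}: {value:.3f}" for metric, value in self.metrics.items()]
        return "\n".join(lines)


card = ModelCard(
    name="incident-triage-v2",
    purpose="Suggest a priority for new IT incidents; a human confirms high-impact cases.",
    training_data="Internal incident tickets (placeholder description).",
    limitations=["Not evaluated on incidents from newly onboarded systems."],
    metrics={"precision": 0.91, "recall": 0.87},  # placeholder values
)
print(card.to_markdown())
```

Generating the card in the training pipeline keeps the reported metrics in sync with the model version that actually ships.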

Real-World Example: Transparent AI in Enterprise Automation

At xCroTek, we observed an enterprise deploying AI agents for IT operations automation. Initially, the system auto-resolved incidents, but engineers did not trust it because they could not see why it made its decisions.

By adding:

- Explainability dashboards

- Action logs

- Human approval workflows

The organization achieved:

- 40% faster incident resolution

- Increased engineer trust

- Compliance-ready documentation

Transparency transformed adoption.

The Role of Agentic AI in Accountability

Agentic AI systems can autonomously plan, decide, and act. This makes accountability even more critical.

To manage agentic AI responsibly:

- Define guardrails and policies

- Monitor agent actions in real-time

- Restrict high-risk tasks

- Implement rollback mechanisms
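These four controls can be combined in a thin wrapper around an agent’s actions. The sketch below is hypothetical: `GuardedAgent`, the action names, and the approval callback are assumptions. It does four things:

- blocks actions outside an allowlist
- requires human approval for high-risk actions
- records every attempt in an audit trail
- keeps undo callbacks so executed actions can be rolled back in reverse order

```python
from typing import Callable


class GuardedAgent:
    """Hypothetical wrapper that applies guardrails to an agent's actions."""

    def __init__(self, allowed: set[str], high_risk: set[str],
                 approve: Callable[[str, dict], bool]) -> None:
        self.allowed = allowed      # policy: actions the agent may take at all
        self.high_risk = high_risk  # actions that need human approval first
        self.approve = approve      # human-in-the-loop callback
        self.audit: list[tuple[str, dict, str]] = []  # trail a monitoring system can read
        self._undo: list[Callable[[], None]] = []

    def act(self, action: str, params: dict,
            do: Callable[[], None], undo: Callable[[], None]) -> bool:
        if action not in self.allowed:
            self.audit.append((action, params, "blocked: not allowed by policy"))
            return False
        if action in self.high_risk and not self.approve(action, params):
            self.audit.append((action, params, "blocked: approval denied"))
            return False
        do()
        self._undo.append(undo)
        self.audit.append((action, params, "executed"))
        return True

    def rollback(self) -> None:
        """Undo every executed action, newest first."""
        while self._undo:
            self._undo.pop()()
        self.audit.append(("rollback", {}, "executed"))


config = {"replicas": 2}
agent = GuardedAgent(allowed={"scale_service", "restart_service"},
                     high_risk={"restart_service"},
                     approve=lambda action, params: False)  # reviewer says no

agent.act("scale_service", {"replicas": 4},
          do=lambda: config.update(replicas=4), undo=lambda: config.update(replicas=2))
agent.act("restart_service", {}, do=lambda: None, undo=lambda: None)
agent.act("delete_database", {}, do=lambda: None, undo=lambda: None)
agent.rollback()
for entry in agent.audit:
    print(entry)
```

Requiring an undo callback for every executed action forces the agent's designers to think about reversibility up front. Actions that cannot be undone are natural candidates for the high-risk set.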

Internal Best Practices for AI Transparency

- Use dashboards for AI performance visibility

- Provide explainable insights to users

- Regularly audit AI models

- Train teams on AI ethics

- Maintain documentation repositories
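Regular audits should include fairness checks. One simple check compares approval rates across groups and flags any group whose rate falls below 80% of the highest group’s rate. This is the "four-fifths rule" heuristic from U.S. employment-selection guidelines. The sketch below assumes decisions are available as (group, approved) pairs. Treat the result as a screening signal for human review, not as proof of bias or of fairness.

```python
from collections import defaultdict


def selection_rate_ratios(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Each group's approval rate divided by the highest group's approval rate."""
    totals: dict[str, int] = defaultdict(int)
    approvals: dict[str, int] = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approvals[group] += int(approved)
    rates = {g: approvals[g] / totals[g] for g in totals}
    best = max(rates.values())
    return {g: (r / best if best > 0 else 1.0) for g, r in rates.items()}


def flag_groups(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Groups below the four-fifths threshold, to be reviewed by a person."""
    return sorted(g for g, r in ratios.items() if r < threshold)


# Synthetic audit sample: group A approved 8/10, group B approved 5/10.
decisions = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
ratios = selection_rate_ratios(decisions)
print(ratios, flag_groups(ratios))
```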

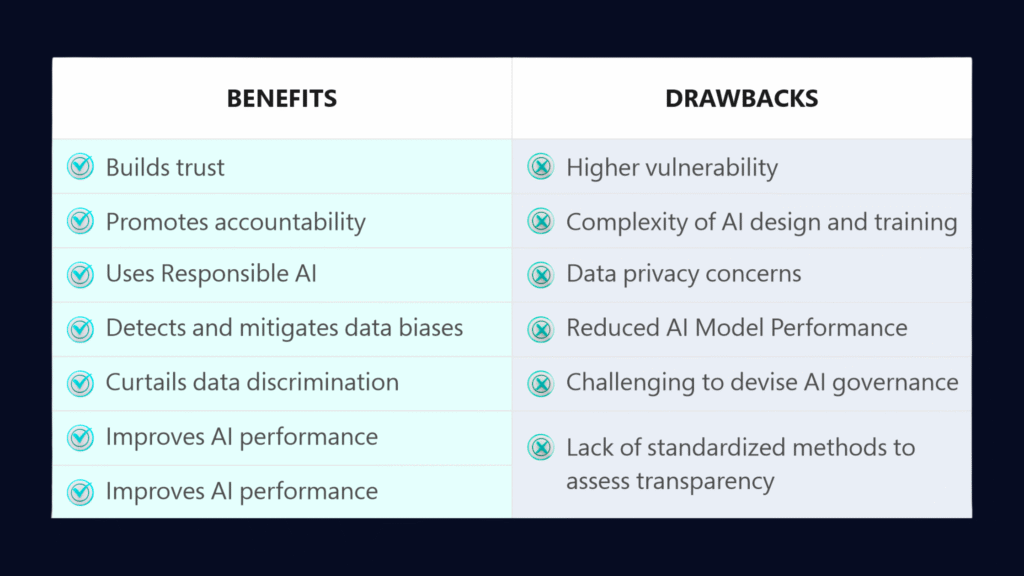

The Business Benefits of Transparent AI Technology

Increased Customer Trust

Transparent AI builds confidence in automated decisions.

Faster Enterprise Adoption

Teams adopt AI faster when they understand it.

Reduced Legal and Compliance Risk

Auditability reduces exposure to regulatory penalties.

Better AI Performance

Continuous monitoring detects model drift early, so teams can retrain or recalibrate before accuracy degrades.
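Drift monitoring typically compares the distribution of a feature or score in production against a baseline. The sketch below computes the Population Stability Index (PSI), a common drift metric, using equal-width bins derived from the baseline sample. The bin count, the small `eps` used to avoid `log(0)`, and the synthetic data are assumptions, and both samples are assumed to be non-empty. A frequently cited rule of thumb treats PSI above about 0.25 as a significant shift, but thresholds should be validated for each use case.

```python
import math


def psi(baseline: list[float], current: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline sample and a current sample."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the baseline range fall into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]  # eps avoids log(0)

    expected, actual = shares(baseline), shares(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


baseline = [i / 100 for i in range(100)]  # e.g. scores seen at validation time
shifted = [v + 0.3 for v in baseline]     # synthetic production scores
print(round(psi(baseline, baseline), 4), round(psi(baseline, shifted), 4))
```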

Challenges in Making AI Transparent

Despite benefits, organizations face challenges:

- Complex deep learning models

- Lack of skilled AI governance teams

- Performance vs interpretability trade-offs

- Data privacy constraints

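A practical response is a layered approach to explainability. Use inherently interpretable models where the stakes are highest, and pair complex models with post-hoc explanations, decision logs, and human review elsewhere.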
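For complex models that cannot be inspected directly, one explainability layer is a model-agnostic, post-hoc technique such as permutation importance. It shuffles one feature at a time and measures how much accuracy drops. The sketch below treats the model as an opaque `predict` function; the toy model and data are hypothetical.

```python
import random
from typing import Callable, Sequence


def permutation_importance(
    predict: Callable[[list[float]], int],
    X: list[list[float]],
    y: Sequence[int],
    n_repeats: int = 5,
    seed: int = 0,
) -> list[float]:
    """Average drop in accuracy when each feature column is shuffled."""
    rng = random.Random(seed)

    def accuracy(rows: list[list[float]]) -> float:
        return sum(predict(r) == t for r, t in zip(rows, y)) / len(y)

    base = accuracy(X)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the labels
            shuffled = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(base - accuracy(shuffled))
        importances.append(sum(drops) / n_repeats)
    return importances


def black_box(row: list[float]) -> int:
    # Stand-in for an opaque model: it secretly uses only feature 0.
    return int(row[0] > 0.5)


X = [[i / 20, (i * 7 % 20) / 20] for i in range(20)]
# In practice y would be held-out ground-truth labels; here they come from the model.
y = [black_box(row) for row in X]
print(permutation_importance(black_box, X, y))
```

The result shows that the model relies on feature 0 and ignores feature 1, without opening the model itself.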

The Future of Transparent AI Technology

The next wave of AI innovation will prioritize:

- Built-in explainability

- AI governance platforms

- Real-time compliance monitoring

- User-facing transparency dashboards

- Ethical AI certifications

Transparent AI will become a competitive differentiator.

For deeper research, refer to:

- NIST AI Risk Management Framework: https://www.nist.gov/itl/ai-risk-management-framework

- EU AI Act overview: https://digital-strategy.ec.europa.eu/en/policies/european-approach-artificial-intelligence

- Google AI Principles: https://ai.google/principles/

- IBM AI Explainability 360: https://aix360.mybluemix.net/

Together, these frameworks, principles, and toolkits reflect widely referenced best practices for accountable AI technology.

Conclusion: Trust is the New Currency of AI

AI technology is powerful, but power without transparency creates risk. Enterprises must move beyond black-box AI and adopt systems that are explainable, observable, and accountable.

At xCroTek, we believe transparency is the foundation of scalable, ethical, and enterprise-ready AI. Organizations that invest in accountable AI today will lead tomorrow’s digital economy.

Call to Action

🚀 Want to build transparent, enterprise-grade AI technology for your business?

Contact xCroTek today to design AI solutions with built-in observability, governance, and trust frameworks.

FAQ

Q1: What is AI technology transparency?

AI technology transparency means making AI systems understandable, explainable, and traceable so users can see how decisions are made.

Q2: Why is AI transparency important for enterprises?

Transparency builds trust, ensures regulatory compliance, reduces risk, and helps organizations detect bias or errors in AI systems.

Q3: What is a black-box AI system?

A black-box AI system produces results without explaining how it reached those conclusions, making it hard to trust or audit.

Q4: How can companies make AI technology accountable?

Companies can implement explainable AI, decision logs, governance frameworks, ethical policies, and human oversight mechanisms.

Q5: What is Explainable AI (XAI)?

Explainable AI provides human-readable explanations for AI decisions, helping stakeholders understand model behavior and predictions.

Q6: Is transparent AI required by regulations?

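In many cases, yes. The EU AI Act imposes transparency obligations, especially on high-risk systems, and GDPR sets rules on automated decision-making about individuals. The NIST AI Risk Management Framework is voluntary, but it also emphasizes explainability, accountability, and risk management.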